According to NCBI data, about 253 million people worldwide have a visual impairment, and 36 million are blind. The American Foundation for the Blind is conducting a study of the technology experiences of visually impaired employees. The study aims to understand the challenges these employees face in the workplace and to find solutions. Technology already makes everyday life easier for people with vision loss in many ways, and AI has extended that help.

Developing assistive technology for the visually impaired means working through many challenging real-world situations. Beyond apps and smartphones, there are wearable and physical devices that can act as genuine assistants in daily life. Many such products have appeared recently.

An AI-powered backpack has recently been attracting attention. Jagdish K. Mahendran, an artificial intelligence developer, and his team built it as a portable navigation aid for people with vision loss. The backpack is powered by Intel's AI hardware, and it could replace a guide dog or white cane, helping users move through the world more easily.

Few assistive technologies let blind people navigate independently, so this voice-assisted backpack could be revolutionary.

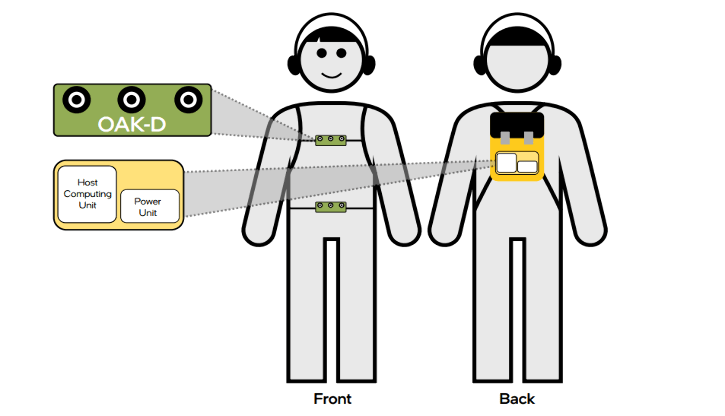

The system consists of a laptop carried in a small backpack, a vest jacket that conceals the camera, and a pocket-sized battery pack worn in a waist pack that lasts about eight hours. The Luxonis OAK-D spatial AI camera can be attached to the vest or the waist pack and connects to the laptop inside the backpack.

According to Intel, the OAK-D is a powerful AI device. It runs on the Intel Movidius VPU and uses the Intel Distribution of OpenVINO toolkit for on-chip edge AI inferencing. Another distinctive feature is a Bluetooth-enabled earphone that lets users interact with the system by voice. Users can give commands or ask the voice assistant questions. The assistant guides them by alerting them to traffic signals, crosswalks, changes in elevation, steps and other obstacles.

By bringing advanced AI and computer vision to a small, portable backpack, Jagdish has created technology that offers reliable help to people with vision loss.

Here are some other unconventional assistive technologies that have appeared recently.

- OrCam MyEye is a voice-activated wearable device developed by an Israel-based company. It can be attached to virtually any pair of glasses. It reads aloud text from any surface, turning visual information into real-time audio. The device is suitable for people with all levels of visual impairment. This wireless device can connect via Bluetooth to earphones or speakers. Its optical sensor can also recognize people's faces and announce them to the user.

- OrCam Read is a pen-shaped device that helps people with reading disabilities or visual impairment read both printed text and text on digital screens. This AI-based assistive device won a CES 2021 Innovation Award. It relies on a text-to-speech reading engine, advanced computer vision and AI.

- Envision Glasses are another advanced AI-based assistive technology. They can convert any kind of text into speech and support more than 60 languages. These smart glasses are designed for the visually impaired. Envision's AI software is integrated into the lightweight Google Glass to provide cost-effective visual support. Advanced AI and optical character recognition (OCR) work together to describe the world through speech. The glasses can describe scenes through audio, recognize the faces of the user's friends and family, help find personal objects and make real-time video calls.

All of these AI-assisted wearables are comfortable, easy to use, reliable and acceptable to users. They aim to make the lives of visually impaired people easier and smarter, with less dependence on traditional aids. When people with visual impairments can get help hands-free, it gives them immense freedom to explore and understand the world.